Setup

Human morality is a set of cognitive devices designed to solve social problems. The original moral problem is the problem of cooperation, the “tragedy of the commons” — me vs. us. But modern moral problems are often different, involving what Harvard psychology professor Joshua Greene calls “the tragedy of commonsense morality,” or the problem of conflicting values and interests across social groups — us vs. them. Our moral intuitions handle the first kind of problem reasonably well, but often fail miserably with the second kind. The rise of artificial intelligence compounds and extends these modern moral problems, requiring us to formulate our values in more precise ways and adapt our moral thinking to unprecedented circumstances. Can self-driving cars be programmed to behave morally? Should autonomous weapons be banned? How can we organize a society in which machines do most of the work that humans do now? And should we be worried about creating machines that are smarter than us? Understanding the strengths and limitations of human morality can help us answer these questions.

- 2017 Festival

- Society

Explore More

Society

For years, Yale undergraduate students have lined up to take a wildly popular course called Life Worth Living. Bucking the highly competitive tone you might expect at an Ivy L...

Global conflicts and health crises have put into stark relief deeply-ingrained gender roles in society. Yet the past years have also seen record-high numbers of women running...

After millennia of human existence, we’re still figuring out and talking constantly about one of our most fundamental behaviors – sex. Despite the sexual revolution of the 60s...

Teenagers and young adults today are dealing with challenges their parents never experienced and couldn’t have prepared for. Nobody has a map and the road to resolution can be...

The unflinching humanity and morality that Martin Luther King, Jr. embodied is part of what makes his legacy so lasting. In addition to his preeminent civil rights work, he sp...

Whether you love setting New Year’s resolutions or ignore them entirely, there’s still a certain mix of nostalgia and excitement over the ending of one year and the possibilit...

Living a happy life isn’t as simple as having a smile on your face all the time. We often think that our negative emotions should be minimized and repressed, but acknowledging...

The human capacity for empathy allows us to communicate, collaborate and understand each other. But we all know empathy isn’t always easy, and we can feel worn down by the eff...

When Duke divinity school professor Kate Bowler wrote her best-selling memoir, “Everything Happens for a Reason (and Other Lies I’ve Loved),” she was grappling with the conseq...

For adults, the pressure to drink at social engagements, work events, restaurants or almost anywhere outside the home can feel constant. Recent research has found that “no amo...

In today’s world, we tend to switch jobs more frequently than previous generations, and are more likely to have multiple jobs. Side gigs where we express passions or find mean...

Finding ways to ground ourselves on a planet too often in turmoil can foster the resilience we need to function at our best. By maintaining close personal ties, learning new s...

Philosophers throughout history have debated what it means to live a good life, and it remains an ongoing and unresolved question. Deep personal relationships, fulfilling work...

You may have heard of Dry January and mocktails, but what is being "sober curious" really about? Sans Bar's Chris Marshall explains the growing movement and shares how he's b...

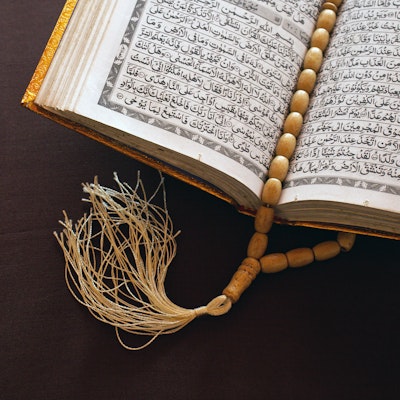

The United States is a more secular society than many, and the percentage of people who don’t identify with organized religion is rising. Some of the impacts from that shift m...

About two decades ago, NPR host Mary Louise Kelly had her first child and went down the extremely common yet commonly daunting life path of balancing a demanding career with a...

Everyone has a story to tell – and sharing them reminds us of our common humanity. Few know this better than StoryCorps CEO Sandra Clark. Over the last 20 years, the organizat...

It's been a big year for Patagonia, as it celebrated a 50th anniversary and legally restructured to commit all profits to environmental causes. What can be learned from the co...

Artificial intelligence is clearly going to change our lives in multiple ways. But it’s not yet obvious exactly how, and what the impacts will be. We can predict that certain...

Advocates, healthcare providers, legislators, researchers, and venture capitalists are bringing the unique health needs of women to light – from vigorous policy debates on iss...